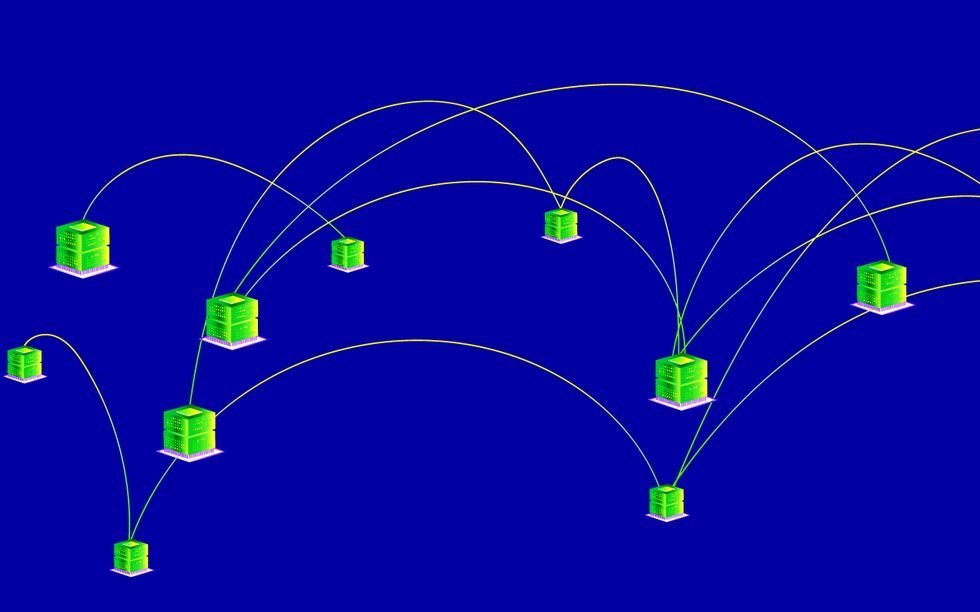

Artificial intelligence harbors an enormous energy appetite. Such constant cravings are evident in the hefty carbon footprint of the data centers behind the AI boom and the steady increase over time of carbon emissions from training frontier AI models. No wonder big tech companies are warming up to nuclear energy, envisioning a future fueled by reliable, carbon-free sources.

But while nuclear-powered data centers might still be years away, some in the research and industry spheres are taking action right now to curb AI's growing energy demands. They're tackling training as one of the most energy-intensive phases in a model's life cycle, focusing their efforts on decentralization.

Decentralization allocates model training across a network of independent nodes rather than relying on one platform or provider. It allows compute to go where the energy is, be it a dormant server sitting in a research lab or a computer in a solar-powered home. Instead of constructing more data centers that requ
